Testing a software application is no longer as simple as ensuring that its features work correctly. Scalability testing cannot be ignored, because publicly available applications can be accessed by anyone, at any time, from anywhere in the world. You are no longer concerned only with how your applications perform locally. You must now ensure that your application is reliable from multiple locations around the world, across different devices and network conditions, and that it performs seamlessly as the number of users increases and decreases over time. Where scalability testing was once a ‘nice to have’ from a software development perspective, it has evolved into a ‘must have’ due to user demands and the natural evolution of the modern-day Internet.

While the features of an application need to work smoothly and flawlessly, users can be more affected by its stability and responsiveness. Performance testing is an essential aspect of non-functional testing. Which of the many flavors of performance testing you need depends on the type of usage expected for the application. Let us look at the process of performance testing in detail next.

What is Web Application Performance Testing?

Performance testing refers to analyzing things like the speed, responsiveness, scalability, and stability of an application under varying usage (stress) levels. To do this, developers can induce periods of higher usage artificially through manual methods or specific performance testing tools. We’ll be looking at some of them later in this article.

The primary method of testing the performance of an application is to apply varying load levels and analyze how it responds. There are three main types of performance tests.

Load Testing

Load testing provides detailed insights into how the application fares under different amounts of usage. Sudden spikes in usage are also induced to ascertain how the application responds and to monitor how the infrastructure scales along with it. Innovative load testing tools like LoadView allow you to analyze applications based on traffic from geographically distributed locations. This type of testing can be essential for a global user base.
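
As a rough illustration of applying load, here is a minimal Python sketch. It assumes a hypothetical `handle_request` function that stands in for one request to the application (simulated here with a short sleep), so it shows the shape of a load run rather than how any particular tool works.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def handle_request(_):
    """Hypothetical stand-in for one request to the application under test."""
    start = time.perf_counter()
    time.sleep(0.01)  # simulated server work; replace with a real request
    return time.perf_counter() - start


def run_load(concurrent_users, requests_per_user):
    """Send requests from a pool of workers and collect per-request latencies."""
    total = concurrent_users * requests_per_user
    with ThreadPoolExecutor(max_workers=concurrent_users) as pool:
        return list(pool.map(handle_request, range(total)))


latencies = run_load(concurrent_users=5, requests_per_user=4)
print(f"{len(latencies)} requests, slowest {max(latencies) * 1000:.1f} ms")
```

Raising `concurrent_users` between runs gives a simple way to see how latency changes as usage grows.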

Endurance Testing

Endurance testing is another useful type of test, in which an application is subjected to higher loads for extended periods. The primary benefit of endurance testing is that it identifies problems such as memory leaks, which can surface during extended stints of high usage, as well as other weaknesses in infrastructure.
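
To make the memory leak case concrete, the sketch below uses Python's standard `tracemalloc` module to measure how much memory a handler retains across many calls. The `leaky_handler` function and its cache are hypothetical and deliberately broken for the sake of the example.

```python
import tracemalloc

_cache = []  # deliberately leaky: grows on every call and is never cleared


def leaky_handler(payload):
    """Hypothetical request handler that leaks by retaining every payload."""
    _cache.append(payload * 100)
    return len(payload)


def memory_growth(handler, iterations):
    """Return the bytes of traced memory gained across `iterations` calls."""
    tracemalloc.start()
    before, _ = tracemalloc.get_traced_memory()
    for i in range(iterations):
        handler(f"request-{i}")
    after, _ = tracemalloc.get_traced_memory()
    tracemalloc.stop()
    return after - before


growth = memory_growth(leaky_handler, 10_000)
print(f"traced memory grew by {growth} bytes")
```

Growth that keeps climbing with the number of iterations, instead of leveling off, is the pattern an endurance test is meant to expose.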

Stress Testing

Stress testing became popular with the concept of software resilience engineering. It allows developers to identify the point at which applications (or one or more of their components) fail due to extremely high usage. While pushing an application or system to the breaking point may seem counterintuitive to those not familiar with software resilience engineering, it gives developers and testers insight into exactly how much load, or stress, a system can endure before it crashes. Failures are going to happen, and it is best to be prepared for them. Stress testing also demonstrates how your system responds and recovers, and it may show that infrastructure and capacity investments are necessary.

Let’s say you are going to launch a new product and marketing campaign, and you’ve estimated the traffic that will be generated to your site and applications. If your application fails earlier than anticipated during your stress test, this indicates that more system resources are likely necessary to handle the planned levels of incoming traffic.
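
The launch scenario above could be sketched as follows. The `error_rate` function is a made-up model of a system that starts failing beyond an assumed capacity of 800 users, and the 5% error threshold and traffic estimate are illustrative values only.

```python
def error_rate(concurrent_users, capacity=800):
    """Hypothetical system model: requests beyond `capacity` fail."""
    if concurrent_users <= capacity:
        return 0.0
    return (concurrent_users - capacity) / concurrent_users


def find_breaking_point(start, step, max_users, max_error_rate=0.05):
    """Raise load in steps; return the first level whose error rate exceeds the limit."""
    for users in range(start, max_users + 1, step):
        if error_rate(users) > max_error_rate:
            return users
    return None  # no failure observed within the tested range


expected_peak = 1000  # assumed traffic estimate for the launch
breaking_point = find_breaking_point(start=100, step=100, max_users=2000)
if breaking_point is not None and breaking_point < expected_peak:
    print(f"Fails at {breaking_point} users, below the expected {expected_peak}")
```

In a real stress test, `error_rate` would come from measurements at each load level instead of a formula.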

What is Scalability Testing?

In comparison to performance testing, scalability testing refers to analyzing how a system responds to changes in the number of simultaneous users. Systems are expected to scale up or down and adjust the amount of resources being utilized so that users experience consistent and stable performance regardless of the number of simultaneous users.

Scalability testing can also be done on hardware, network resources, and databases to see how they respond to varying numbers of simultaneous requests. In contrast to load testing, where you analyze how your system responds to various load levels, scalability testing analyzes how well your system scales in response to various load levels. Scalability testing is especially important in containerized environments.
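
One simple way to express "consistent performance" as a check is sketched below. The measurements and the 20% tolerance are assumed values chosen for illustration, not a standard threshold.

```python
def scales_consistently(response_times_ms, tolerance=0.20):
    """True if every level stays within `tolerance` of the lowest level's response time."""
    levels = sorted(response_times_ms)
    reference = response_times_ms[levels[0]]
    return all(
        response_times_ms[users] <= reference * (1 + tolerance)
        for users in levels
    )


# Hypothetical measurements: simultaneous users -> median response time (ms)
measured = {100: 210.0, 500: 225.0, 1000: 240.0}
print(scales_consistently(measured))
```

A system that scales well keeps this check passing as user counts rise, because it adds resources instead of slowing down.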

The Performance Testing Process

Many factors determine the type and quantity of performance testing required by each application. However, these are some general steps that will put you on the right path.

Establish Baselines

A baseline must be established so that the outcomes of any process can be measured. Performance testing is no different. Developers can perform basic tests to identify the maximum load that can be accommodated by the application without affecting response times and stability. The baseline can then be documented and compared with future tests. Baselines are especially useful in case improvements and/or corrective actions are to be performed.
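
As a sketch of how a baseline might be recorded and compared with a later run, consider the following. The result dictionaries and the 300 ms response limit are hypothetical, and the function names are illustrative.

```python
def make_baseline(results, response_limit_ms):
    """Record the highest load level whose response time stays within the limit."""
    passing = [users for users, ms in results.items() if ms <= response_limit_ms]
    return {
        "max_users": max(passing) if passing else 0,
        "response_limit_ms": response_limit_ms,
    }


def regressed(baseline, new_results):
    """True if a later run sustains fewer users than the documented baseline."""
    current = make_baseline(new_results, baseline["response_limit_ms"])
    return current["max_users"] < baseline["max_users"]


# Hypothetical runs: users -> response time (ms)
first_run = {100: 180.0, 200: 190.0, 400: 240.0, 800: 650.0}
baseline = make_baseline(first_run, response_limit_ms=300.0)
later_run = {100: 185.0, 200: 320.0, 400: 500.0}
print(baseline["max_users"], regressed(baseline, later_run))
```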

Some developers maintain a staging application with specifications and configurations identical to the production environment and compare it to the improved instance. The benefit of this approach is that new tests can be executed in both environments so that previously unidentified scenarios can also be covered.

Waterfall Charts

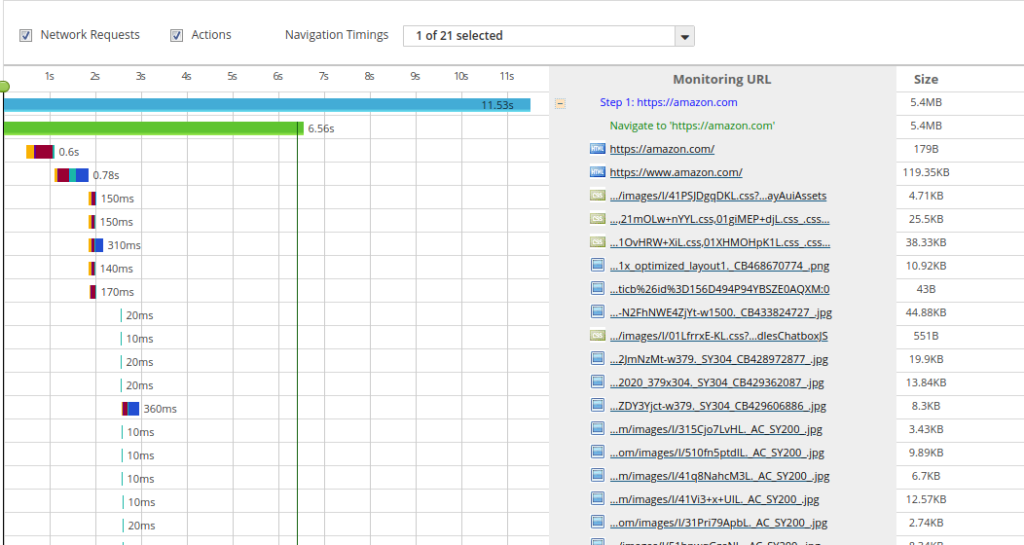

Waterfall analysis is performed at various stages of the performance optimization process. Its primary purpose is to identify the components or functions of an application that are slower than others, so that remedial action can be applied specifically to them.

A detailed waterfall analysis will produce a breakdown of the time consumed by each aspect of a request to an application, such as DNS, time to first packet, and SSL.
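
To show what such a breakdown looks like as data, here is a small sketch that ranks the phases of a single request by their share of total time. The phase names and timings are invented for the example.

```python
def waterfall_breakdown(phases_ms):
    """Return (phase, ms, percent of total) tuples, slowest phase first."""
    total = sum(phases_ms.values())
    ranked = sorted(phases_ms.items(), key=lambda item: item[1], reverse=True)
    return [(name, ms, round(100 * ms / total, 1)) for name, ms in ranked]


# Hypothetical timings for a single request, in milliseconds
request = {
    "dns": 20.0,
    "connect": 30.0,
    "ssl": 50.0,
    "first_packet": 250.0,
    "download": 150.0,
}
for name, ms, share in waterfall_breakdown(request):
    print(f"{name:<12} {ms:>6.1f} ms  {share:>5.1f}%")
```

The phase at the top of the list is the first candidate for remedial action.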

Performance Testing

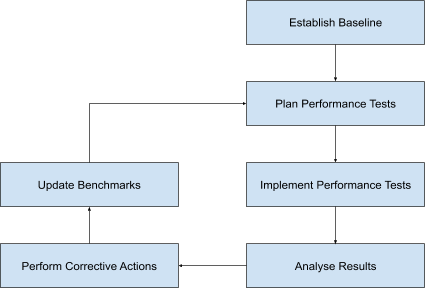

The important thing to remember about performance testing is that it is a continuous process. Usage of an application can be expected to increase over time, so its performance requires regular attention. The performance testing process can be summarized as follows:

Once the benchmarks are established, the next step is to plan the tests. The amount of load applied in each test will follow a scale with a specific number of levels (1X-10X). Other factors, such as the type of usage or function and the geographic dispersion of requests, can also be considered based on the circumstances.
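
A test plan along these lines can be written down as plain data. The sketch below assumes a baseline of 50 users and an invented regional traffic mix; both are placeholders for your own estimates.

```python
def load_plan(baseline_users, levels=10):
    """Return user counts for 1X through `levels`X of the baseline load."""
    return [baseline_users * multiplier for multiplier in range(1, levels + 1)]


def split_by_region(users, region_pct):
    """Divide a user count across regions using whole-number percentages."""
    return {region: users * pct // 100 for region, pct in region_pct.items()}


regions = {"us-east": 50, "eu-west": 30, "ap-south": 20}  # assumed traffic mix
for users in load_plan(baseline_users=50, levels=3):
    print(users, split_by_region(users, regions))
```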

Thereafter, the tests can be executed. Depending on the size of the application and the complexity of its functions, testing can be done manually or through a third-party tool like LoadView. These tools allow developers to record sequences of actions, which the platform then replicates in larger quantities to imitate higher loads.

Once the results are analyzed, it will be possible to identify the areas of the application that are causing delays or instability. Performance testing platforms provide many types of reports, such as the best and worst load times, detailed data of individual requests, waterfall charts, and error reports. Error reports can be important for identifying runtime errors that don’t usually occur with functional testing.
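
The kind of summary such reports contain can be approximated in a few lines. This sketch assumes each sample is a hypothetical `(load_time_ms, ok)` pair; real platforms report far more detail.

```python
def summarize(samples):
    """Summarize (load_time_ms, ok) samples into best, worst, and error rate."""
    times = [ms for ms, ok in samples if ok]
    errors = sum(1 for _, ok in samples if not ok)
    return {
        "best_ms": min(times) if times else None,
        "worst_ms": max(times) if times else None,
        "error_rate": errors / len(samples) if samples else 0.0,
    }


# Hypothetical samples: the last request failed at runtime
samples = [(120.0, True), (340.0, True), (95.0, True), (0.0, False)]
print(summarize(samples))
```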

Identify Architecture Bottlenecks

Memory leaks are one of the most annoying problems for developers. They don’t happen consistently and are relatively difficult to identify. But they are not the only type of issue that can crop up. CPU, I/O, and network are some of the other areas that can be affected. Most modern applications use containerized environments. While container orchestration platforms provide many forms of auto-scaling, infrastructure can still cause bottlenecks.

Corrective Action

Corrective actions can be two-fold. Firstly, it is vital to address all performance issues identified in the application's features. These can be optimized both in code and in database interactions. Secondly, infrastructure bottlenecks can be resolved quickly by adjusting the amount or types of hardware allocated to your application. However, this is only possible to a certain extent, due to both physical limitations and financial restrictions. More complex scenarios may require changes to load balancing settings and decentralizing servers to regional data centers.

Once these actions have been completed, the next step is to execute the performance tests again. This is necessary so that the remedial actions applied can be validated and quantified. The new results can then be compared against the baseline and benchmarked against external applications. The comparison indicates to what extent the previously identified bottlenecks and delays remain.
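
Quantifying a fix can be as simple as a percentage change against the baseline. The figures below are made up for illustration.

```python
def improvement_pct(baseline_ms, retest_ms):
    """Percentage reduction in response time relative to the baseline."""
    return (baseline_ms - retest_ms) / baseline_ms * 100


# Hypothetical numbers: 400 ms before the fix, 300 ms after
print(f"{improvement_pct(400.0, 300.0):.1f}% faster than baseline")
```

A negative result means the retest was slower than the baseline, which suggests the corrective action did not help or introduced a new delay.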

The process starts all over again after that. Baselines and performance tests can be updated, and new tests can be planned.

Performance Testing vs. Scalability Testing: Conclusion

This article takes a brief look at the performance testing process for software applications. The steps discussed are generalized to suit the majority of scenarios. However, specific applications may require attention in particular areas. We also mentioned tools that can be used to perform the actual performance tests. While it is possible to perform these tests manually, it is a lot more efficient to use a purpose-built platform. Learn more about LoadView and how to perform load tests for your websites, applications, APIs, and more.
