For a long time now, organizations have been designing and developing software systems and improving upon them year after year. Several architectures, technologies, and design patterns have evolved over the same period to keep up with continuous change. Microservices, more formally known as microservice architecture, is one of the architectural styles that emerged from the world of scalable systems, continuous development, polyglot programming, and decoupled applications. Microservices divide the platform along service boundaries, which makes it easier to deploy and maintain each service individually. Because each module is independent of the others, adding new features or scaling a service becomes much easier and more efficient.

What are Programming Paradigms?

As a microservice architecture grows with the business, the system can become more complex, especially if it is not properly maintained and scaled, or if established programming paradigms are ignored. A paradigm is not a language but a style of programming. There are many programming languages, and each of them follows one or more of these approaches, which are referred to as paradigms.

Departing from programming paradigms sometimes leads to new ways and methods of programming, but often it is best to follow the rules and avoid potential issues. Some of the more common paradigms are imperative, structured, object-oriented, and declarative programming. Separately, testing the overall functionality of multiple services is much more difficult because of the application's distributed nature, which calls for a deliberate strategy for load testing microservices and finding weak points and bottlenecks across systems.
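To make the idea of a paradigm concrete, here is one small task, summing the squares of the even numbers in a list, written twice in Python (an illustrative choice; the article does not prescribe a language). The first version is imperative, the second is more declarative. Both return the same result; only the style differs, which is the sense in which a paradigm is a style rather than a language.

```python
def sum_even_squares_imperative(numbers: list[int]) -> int:
    # Imperative style: spell out *how* to compute the result, step by step,
    # updating a running total as we go.
    total = 0
    for n in numbers:
        if n % 2 == 0:
            total += n * n
    return total


def sum_even_squares_declarative(numbers: list[int]) -> int:
    # Declarative style: describe *what* result is wanted; the looping and the
    # running total are left to sum() and the generator expression.
    return sum(n * n for n in numbers if n % 2 == 0)


if __name__ == "__main__":
    data = [1, 2, 3, 4, 5, 6]
    print(sum_even_squares_imperative(data))   # 56
    print(sum_even_squares_declarative(data))  # 56
```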

What are Microservices?

Before we discuss load testing considerations, let’s briefly review what microservices are, along with their features and benefits. Microservices are based on a simple idea: the single responsibility principle. Microservices extend this principle to loosely coupled services that can be developed, deployed, and maintained independently. The approach decomposes a software system into autonomous, independently deployed units that communicate with each other through language-agnostic interfaces and together solve a business problem.
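As a rough illustration of a single-responsibility service behind a language-agnostic interface, the sketch below uses only Python's standard library to expose one capability, a price lookup, as JSON over HTTP. The service itself, the `/prices/<sku>` route, the port, and the in-memory `PRICES` data are all hypothetical. Any client that speaks HTTP and JSON, whatever language it is written in, could call it.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical in-memory data owned by this service alone.
PRICES = {"sku-1": 19.99, "sku-2": 5.00}


def get_price(sku: str) -> tuple[int, dict]:
    """The service's single responsibility: look up the price of one SKU."""
    if sku in PRICES:
        return 200, {"sku": sku, "price": PRICES[sku]}
    return 404, {"error": f"unknown sku {sku}"}


class PriceHandler(BaseHTTPRequestHandler):
    """Exposes get_price over HTTP with JSON bodies (a language-agnostic interface)."""

    def do_GET(self) -> None:
        # Assumed route shape for this sketch: /prices/<sku> (no query string).
        parts = self.path.strip("/").split("/")
        if len(parts) == 2 and parts[0] == "prices":
            status, body = get_price(parts[1])
        else:
            status, body = 404, {"error": "not found"}
        payload = json.dumps(body).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)


if __name__ == "__main__":
    # Each service runs as its own process and can be deployed on its own.
    HTTPServer(("127.0.0.1", 8080), PriceHandler).serve_forever()
```

Keeping the core logic in `get_price`, separate from the HTTP handler, also makes the service easy to unit test in isolation.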

Key Features of Microservices

- Each unit, or service, is independent, lightweight, and loosely coupled.

- Each service has its own code base which is developed and managed by a small team.

- Each service can pick its own technology stack based on the problem it is solving.

- Each service has its own DevOps plan (it is developed, tested, released, deployed, scaled, and maintained independently).

- Services communicate with each other through APIs, typically REST over HTTP, across the network.

- Each service persists its own data, using whatever storage mechanism works best for it.
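The data-ownership point can be sketched with two hypothetical services, `InventoryService` and `OrderService`. Each keeps its own state, and the order service changes stock only by calling the inventory service's public methods, which stand in here for REST calls. In a real system the two would run as separate processes with separate data stores; keeping them in one file is purely for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class InventoryService:
    """Owns the stock data; no other service reads or writes _stock directly."""
    _stock: dict[str, int] = field(default_factory=dict, init=False)

    # Public API. In a real deployment these would be REST endpoints and
    # _stock would live in this service's own database.
    def restock(self, sku: str, qty: int) -> None:
        self._stock[sku] = self._stock.get(sku, 0) + qty

    def reserve(self, sku: str, qty: int) -> bool:
        available = self._stock.get(sku, 0)
        if available < qty:
            return False
        self._stock[sku] = available - qty
        return True


@dataclass
class OrderService:
    """Owns order records; reaches inventory only through its public API."""
    inventory: InventoryService
    _orders: list[dict] = field(default_factory=list, init=False)

    def place_order(self, sku: str, qty: int) -> dict:
        if not self.inventory.reserve(sku, qty):
            return {"status": "rejected", "reason": "insufficient stock"}
        order = {"id": len(self._orders) + 1, "sku": sku, "qty": qty, "status": "placed"}
        self._orders.append(order)
        return order
```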

Benefits of Microservices

- Independent development. Developers are free to choose technology stacks. Because each microservice serves a single goal, it has its own codebase that is developed and tested by a small, focused team, which improves productivity, innovation, and quality.

- Independent releases. Bug fixes and other changes are easier and less risky to ship. Each service can be unit tested individually.

- Independent deployments. We can update a microservice without affecting the overall application.

- Independent scaling. We can scale a system horizontally, adding instances of the same microservice on demand based on traffic.

- Graceful degradation. If one service goes down, the failure does not have to take the entire application down with it, which helps prevent catastrophic, system-wide failures.
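Horizontal scaling and graceful degradation are often handled together in front of a service. The sketch below shows one common approach, round-robin load balancing with a fallback response; the article does not name a specific technique, and `RoundRobinBalancer`, `Instance`, and the fallback function are hypothetical names. Each instance is modeled as a callable that raises `ConnectionError` when it is down.

```python
import itertools
from collections.abc import Callable

# Hypothetical model: an "instance" is any callable that serves a request
# and raises ConnectionError when that instance is down.
Instance = Callable[[str], str]


class RoundRobinBalancer:
    """Spreads requests across horizontally scaled copies of one service."""

    def __init__(self, instances: list[Instance], fallback: Callable[[str], str]):
        self._instances = list(instances)
        self._next_index = itertools.cycle(range(len(self._instances)))
        self._fallback = fallback

    def handle(self, request: str) -> str:
        # Try each instance at most once, continuing the rotation.
        for _ in range(len(self._instances)):
            instance = self._instances[next(self._next_index)]
            try:
                return instance(request)
            except ConnectionError:
                continue  # this instance is down; move on to the next one
        # Every instance failed: degrade gracefully instead of failing the caller.
        return self._fallback(request)


if __name__ == "__main__":
    def healthy(req: str) -> str:
        return f"ok:{req}"

    def broken(req: str) -> str:
        raise ConnectionError("instance down")

    lb = RoundRobinBalancer([broken, healthy], fallback=lambda req: "cached:" + req)
    print(lb.handle("/products"))  # ok:/products
```

In practice the fallback might return cached or default data, so a failed service degrades one feature rather than the whole page.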

Drawbacks of Microservices

- Because each service is hosted independently, each one requires its own monitoring and maintenance tooling.

- The overall application design can hurt performance because network overhead comes into play. Services communicate via API calls, so careful use of caching and concurrency is needed.

- Application-level tests are required to ensure new changes do not break existing functionality.

- Each service has its own release workflow, release plan, and release cycle. As a result, the system requires significant maintenance effort and automated deployment workflows.
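One way to soften the network overhead of service-to-service API calls is to cache a downstream service's responses for a short time. This is a minimal time-to-live (TTL) cache sketch, assuming a hypothetical `fetch` callable that performs the remote call; the injectable `clock` exists only to make expiry easy to test. It is not thread-safe, so concurrent callers would need a lock or similar coordination.

```python
import time
from collections.abc import Callable, Hashable


class TTLCache:
    """Reuses results of a slow remote call for ttl_seconds to save round trips."""

    def __init__(self, fetch: Callable[[Hashable], object], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic):
        self._fetch = fetch          # hypothetical: performs the real API call
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: dict[Hashable, tuple[float, object]] = {}

    def get(self, key: Hashable) -> object:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]          # fresh hit: no network call
        value = self._fetch(key)     # miss or stale entry: call downstream service
        self._entries[key] = (now, value)
        return value
```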

Performance Testing of Microservices

Despite the advantages we have briefly covered, microservices also bring complex challenges. Because multiple services interact with each other through REST-based endpoints, performance degradation in any of them can seriously hurt the business. For example, for an ecommerce application, a 100 ms difference in the response time of its product listings or shopping cart can directly affect the number of orders placed. Or, for an event-driven product with frequent customer interaction, even a delay of a few milliseconds can frustrate customers and drive them elsewhere. Whatever the case, performance and reliability are essential parts of software development, so it is critical that businesses invest the necessary time and effort in performance testing.
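When a few milliseconds matter, averages can hide the slow requests users actually notice, so load test results are usually summarized with percentiles. The helper below is a minimal sketch using the nearest-rank percentile definition on response times in milliseconds; the function names and the choice of p50/p95/p99 are illustrative assumptions, not a LoadView feature.

```python
import math


def percentile(samples_ms: list[float], pct: float) -> float:
    """Nearest-rank percentile of response times; pct must be in (0, 100]."""
    if not samples_ms:
        raise ValueError("no samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples_ms)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank into sorted data
    return ordered[rank - 1]


def latency_summary(samples_ms: list[float]) -> dict[str, float]:
    """Median, tail percentiles, and worst case for one test run."""
    return {
        "p50": percentile(samples_ms, 50),
        "p95": percentile(samples_ms, 95),
        "p99": percentile(samples_ms, 99),
        "max": max(samples_ms),
    }
```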

Load Testing Microservices with LoadView

Choosing the right load testing tool for microservices helps you ensure software quality and deliver a product that wins the market. Early adopters of microservice architecture who have scaled successfully have already gained a competitive advantage. LoadView is one of the only real browser-based load testing tools for websites, web apps, and APIs. It generates user traffic from around the world to give you insight into the performance of your systems under load. Let’s walk through the steps to run a load test against microservice REST API endpoints using LoadView:

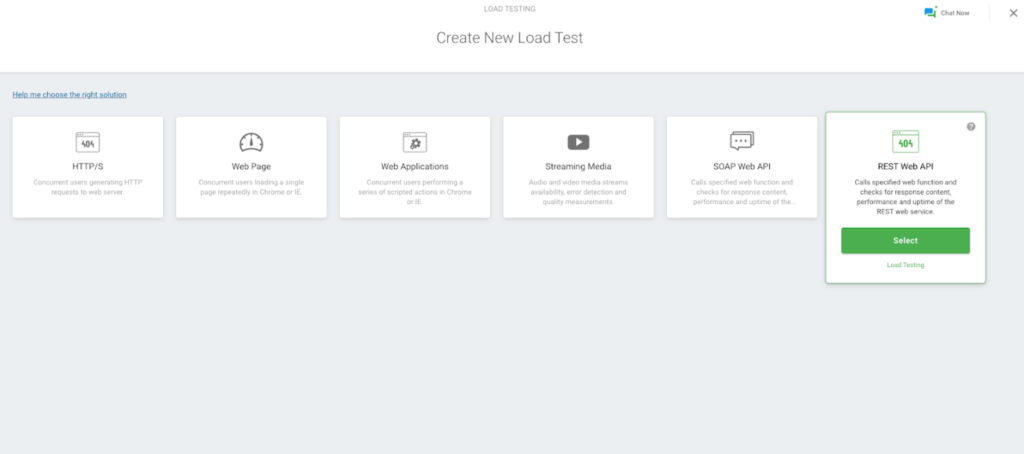

1. Open the LoadView testing page and select Create New Load Test.

2. This window shows the types of tests available in LoadView, such as websites, web applications, and APIs. For this example, we will select the REST Web API option to run load tests against REST API endpoints.

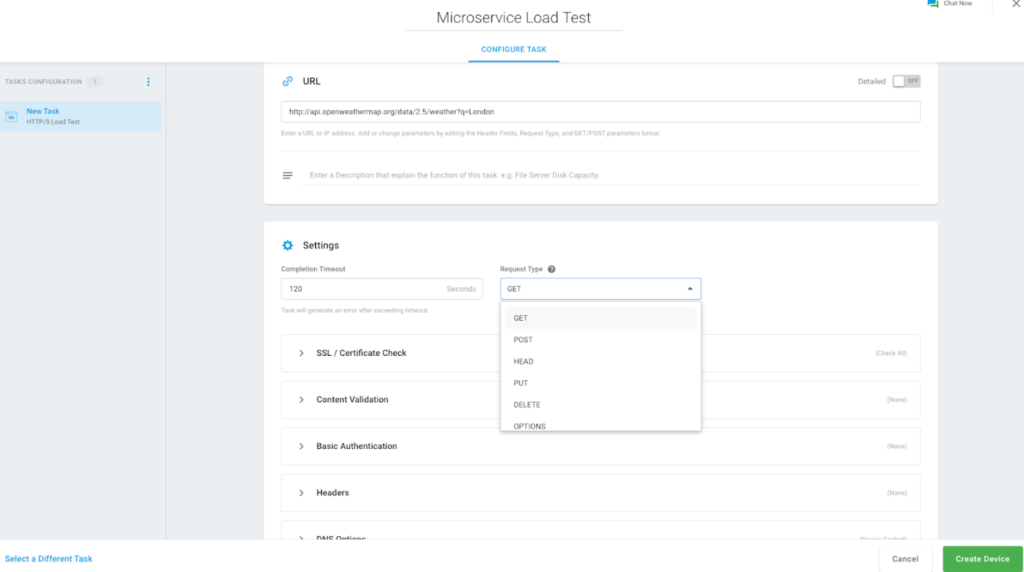

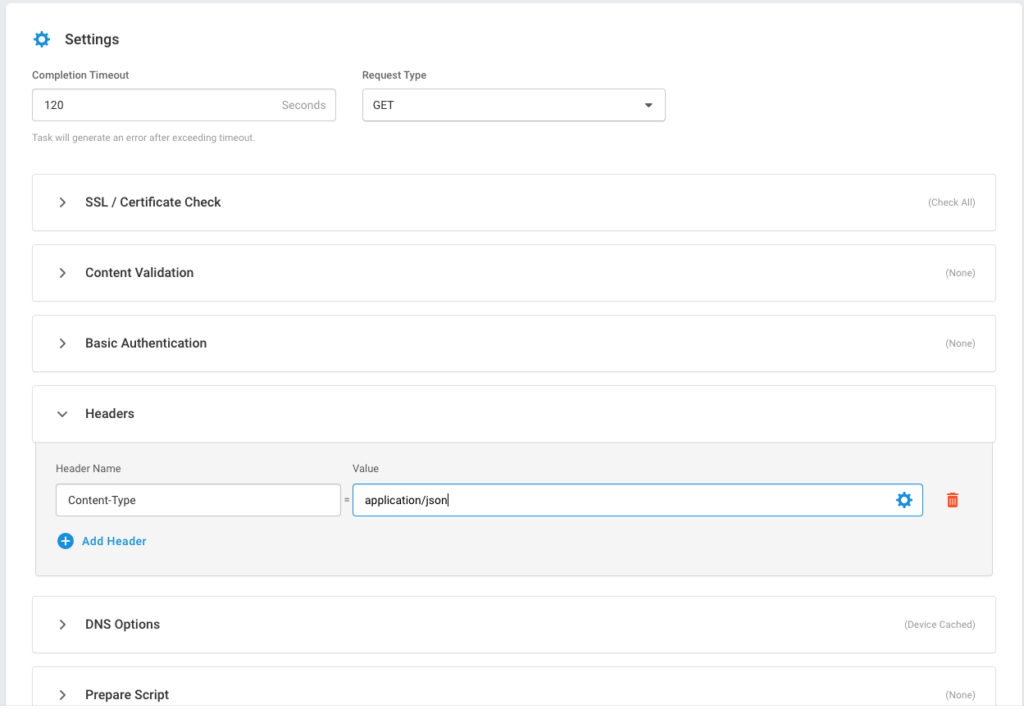

3. In the window that opens, we add each REST API endpoint along with its hostname, request headers, request type, authentication tokens, and request payload. We can add multiple APIs here as well. When done, we select the Create Device button.

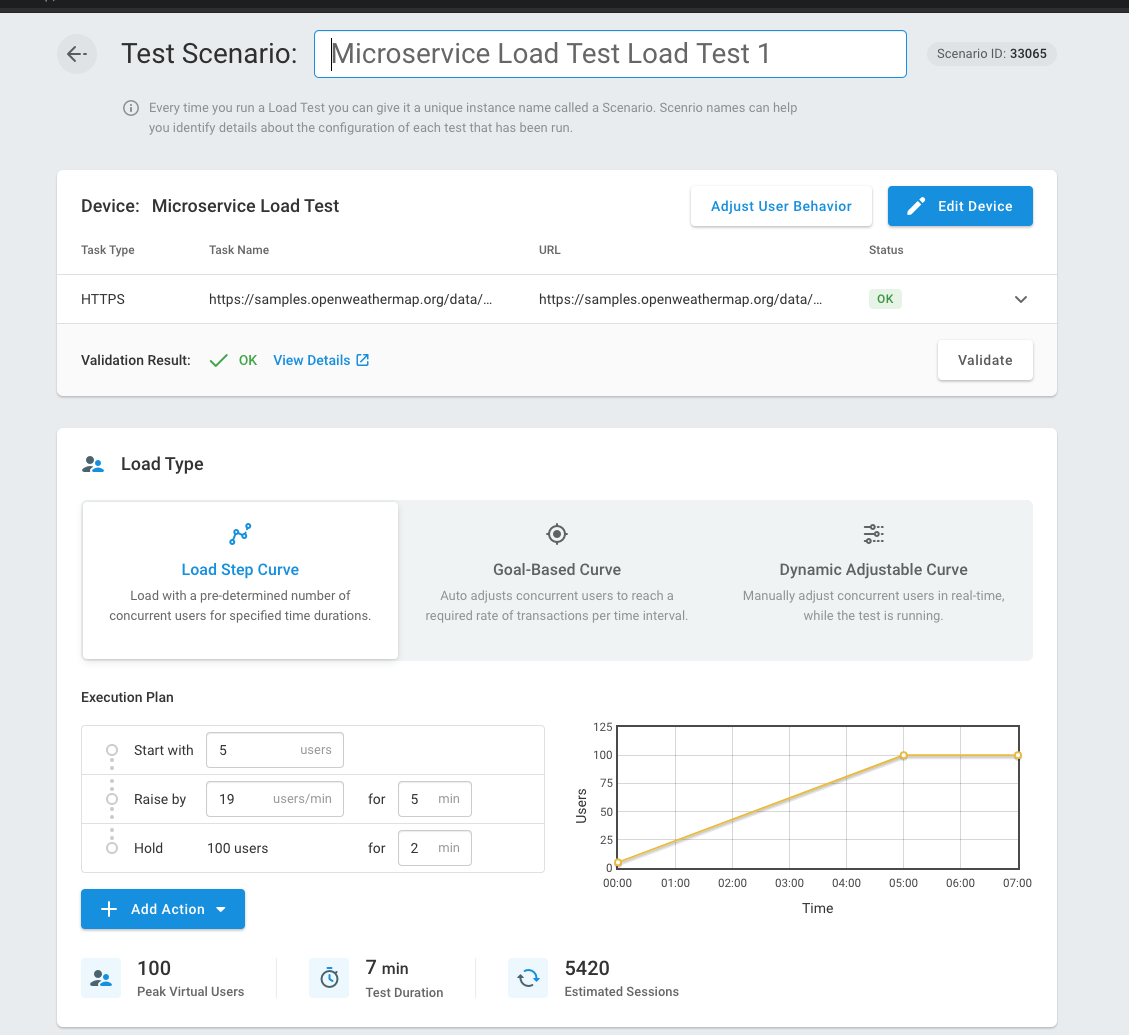

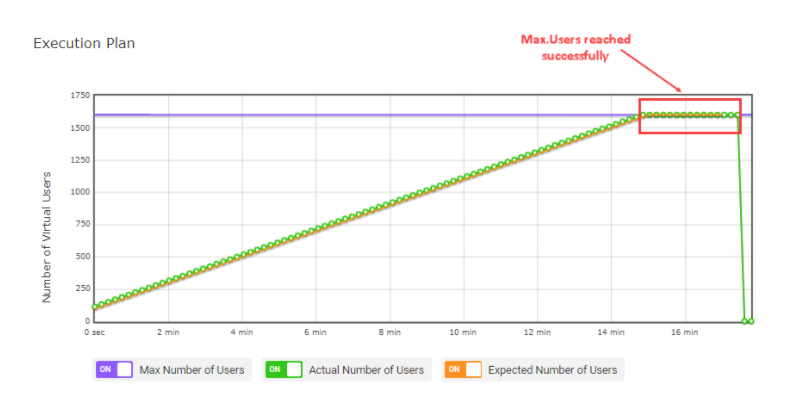

4. Once we have successfully created a device, we see the Test Scenario screen, where we set the Load Type. The right choice depends on the goals of the test:

   - Load Step Curve. Runs the load test with a known number of users and raises traffic after a predetermined warm-up time.

   - Goal-Based Curve. Used when we want a specific API to reach a desired number of transactions per second, scaling up to that rate gradually.

   - Dynamic Adjustable Curve. Lets you set dynamic values for the number of users, the maximum number of users, and the test duration.

5. Once the load test is configured, we select Continue, which starts the test with the specified number of users and test duration.

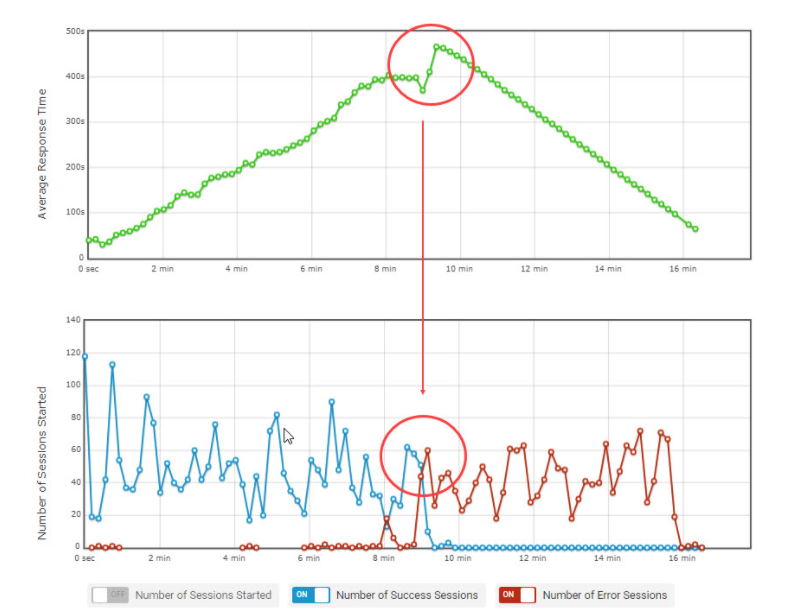

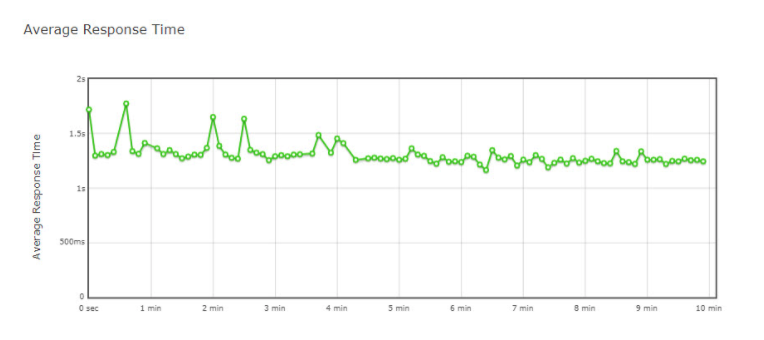

6. After the load test run completes, we can see how the system under test behaved. Metrics such as the response time graph, the concurrent users graph, and session error counts can be viewed and analyzed.
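To connect the steps above to what a load test does under the hood, here is a small, self-contained Python sketch of a step-load test. It is not LoadView and does not use LoadView's API (LoadView drives traffic from real browsers and load injector servers around the world); `RequestSpec`, `send_with_urllib`, `step_curve`, `users_for_goal`, and `run_stage` are hypothetical names. `users_for_goal` swaps in a common capacity estimate, the interactive response time law N = X × (R + Z), as a rough starting point for a goal-based test. The example URL and the bearer-token scheme are assumptions.

```python
import math
import time
import urllib.error
import urllib.request
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class RequestSpec:
    """Hypothetical request definition: URL, method, headers, token, payload."""
    url: str
    method: str = "GET"
    headers: dict[str, str] = field(default_factory=dict)
    token: Optional[str] = None
    payload: Optional[bytes] = None


def send_with_urllib(spec: RequestSpec) -> int:
    """Send one real HTTP request and return its status code."""
    headers = dict(spec.headers)
    if spec.token:
        headers["Authorization"] = f"Bearer {spec.token}"  # assumed auth scheme
    req = urllib.request.Request(spec.url, data=spec.payload, headers=headers,
                                 method=spec.method)
    try:
        with urllib.request.urlopen(req, timeout=10) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code  # 4xx/5xx responses are raised as HTTPError


def step_curve(start_users: int, step_users: int, steps: int) -> list[int]:
    """Step-style load: concurrent users per stage, e.g. 10, 20, 30."""
    return [start_users + i * step_users for i in range(steps)]


def users_for_goal(target_tps: float, avg_response_s: float,
                   think_time_s: float = 0.0) -> int:
    """Rough users needed for a target rate: N = X * (R + Z), rounded up."""
    return math.ceil(target_tps * (avg_response_s + think_time_s))


def run_stage(send: Callable[[RequestSpec], int], spec: RequestSpec,
              users: int, requests_per_user: int) -> dict:
    """One stage: `users` threads each send `requests_per_user` requests."""
    if users < 1 or requests_per_user < 1:
        raise ValueError("users and requests_per_user must be >= 1")

    def one_user(user_index: int) -> tuple[list[float], int]:
        times_ms, errors = [], 0
        for _ in range(requests_per_user):
            start = time.perf_counter()
            try:
                if send(spec) >= 400:
                    errors += 1
            except Exception:  # timeouts, refused connections, etc.
                errors += 1
            times_ms.append((time.perf_counter() - start) * 1000)
        return times_ms, errors

    with ThreadPoolExecutor(max_workers=users) as pool:
        results = list(pool.map(one_user, range(users)))
    all_times = [t for times, _ in results for t in times]
    return {
        "users": users,
        "requests": len(all_times),
        "errors": sum(errors for _, errors in results),
        "avg_ms": sum(all_times) / len(all_times),
        "max_ms": max(all_times),
    }


if __name__ == "__main__":
    # Hypothetical endpoint: only point this at a service you may load test.
    spec = RequestSpec(url="http://localhost:8080/prices/sku-1")
    for users in step_curve(start_users=5, step_users=5, steps=3):
        print(run_stage(send_with_urllib, spec, users, requests_per_user=10))
```

Passing `send` in as a parameter keeps the measurement logic separate from the transport, so the stage runner can be exercised with a fake function before it is pointed at a real endpoint.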

Microservices Application Load Testing: Conclusion

Microservice architecture is being adopted by more and more projects. For DevOps teams, that means another change to the usual testing process. Testing your microservices early and often is key to ensuring your applications hold up in real-world scenarios, and it gives you and your teams insight into any services that need fine-tuning before they reach production and the hands of real users. Make sure your microservices applications can stand up to the demands of your users.

Please visit the LoadView site to learn more about the benefits and features of load testing and sign up for the free trial. You will get a free load test to get started. Or if you want to walk through the product with one of our performance engineers, sign up for a demo that fits into your schedule. Our team will be happy to talk about the platform and show you all the features it offers, like utilizing real browsers, access to over 20 load injector servers located around the world, and much more!